
The artificial intelligence revolution is here, and it arrives charged with the capacity to fundamentally change society for better or worse.

America is currently leading the world in AI development. U.S. companies are building the most advanced models, attracting the most capital, and designing the infrastructure that will shape the next century. But one increasingly obvious constraint stands in the way: access to electricity.


Energy scarcity is only half the story. Even if we succeed in generating the power required to fuel the AI revolution, we must confront a deeper challenge. The same technology that promises medical breakthroughs and economic growth also carries profound societal and even existential risk.

If America wants to win the AI race, we will need to consider a massive expansion of energy production and an equally massive expansion of vigilance.

The energy bottleneck

Modern AI models are trained and deployed in massive data centers packed with tens of thousands of high-performance graphics processing units running continuously. Training a single frontier model can require weeks or months of nonstop computation, while everyday AI tools used by millions of people must process queries around the clock.

These facilities consume electricity at industrial scale, rivaling entire cities in their power demands. In fact, the hyperscale Stargate data center in Saline Township is projected to consume the same amount of electricity as 1.17 million homes.

The industry is still coming to grips with just how much energy the AI revolution will require. Only a few years ago, Silicon Valley leaders were thinking in megawatts.

Meta CEO Mark Zuckerberg, speaking on a podcast less than two years ago, said his company would build larger AI clusters “if we could get the energy to do it,” describing 50-to-100-megawatt facilities and speculating that 1-gigawatt data centers were probably inevitable someday.

Today, 1-gigawatt facilities are on the smaller end of planned AI infrastructure, with projects of up to 5 gigawatts already in motion throughout the United States.

And those headline projects barely scratch the surface. Dozens more large-scale facilities are planned or under construction across the country, and every single one of them will require enormous flows of reliable electricity to operate.

Elon Musk recently stated at Davos that “the limiting factor for AI deployment is, fundamentally, electrical power.” He warned that while AI chip production is increasing exponentially, electricity generation is not.

“Very soon, maybe even later this year,” Musk said, “we will be producing more chips than we can turn on.”

In Santa Clara, California, reports indicate newly built data centers may sit idle for years because the local grid cannot handle the load.

According to a report published by the global consulting group McKinsey & Company, U.S. demand for AI-ready data center capacity could grow from roughly 60 gigawatts today to 170 to 298 gigawatts by 2030.

The International Energy Agency reports that data centers consumed more than 4% of total U.S. electricity in 2024. This amounts to 183 terawatt-hours. IEA projections suggest this number could increase by 133% to 426 TWh by 2030.

To put that in perspective, 426 TWh is roughly equivalent to the annual electricity consumption of more than 40 million American homes.
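For readers who want to check the math, the comparison can be reproduced in a few lines. This is only a rough sketch: the 183 TWh and 426 TWh figures are the IEA numbers cited above, while the per-home figure of about 10,500 kilowatt-hours a year is our own assumption about a typical American household and does not come from the IEA report.

```python
# Figures quoted in the article (IEA, as cited above).
US_DATA_CENTER_TWH_2024 = 183.0
US_DATA_CENTER_TWH_2030 = 426.0

# ASSUMPTION (not from the article): a typical U.S. home uses roughly
# 10,500 kWh of electricity per year. Change this to test other values.
ASSUMED_KWH_PER_HOME_PER_YEAR = 10_500.0

KWH_PER_TWH = 1e9  # 1 terawatt-hour = 1 billion kilowatt-hours


def percent_increase(old: float, new: float) -> float:
    """Return the percentage growth from old to new."""
    return (new - old) / old * 100.0


def homes_equivalent(
    twh: float, kwh_per_home: float = ASSUMED_KWH_PER_HOME_PER_YEAR
) -> float:
    """Return how many homes' annual usage adds up to the given TWh."""
    return twh * KWH_PER_TWH / kwh_per_home


if __name__ == "__main__":
    growth = percent_increase(US_DATA_CENTER_TWH_2024, US_DATA_CENTER_TWH_2030)
    homes = homes_equivalent(US_DATA_CENTER_TWH_2030)
    print(f"Projected growth: {growth:.0f}%")
    print(f"Home equivalent: {homes / 1e6:.1f} million homes")
```

Under that household assumption, the projected growth rounds to 133% and the 2030 figure works out to a little over 40 million homes. A different per-home estimate would shift the second number accordingly.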

The dilemma is obvious. If we do not have reliable energy, AI innovation will be compromised and could potentially migrate elsewhere. Worse, American households could find themselves competing with Big Tech for increasingly scarce power, driving up electricity costs for families and small businesses.

But energy is only the first layer of this story.


The promise and the disruption

AI is not your typical technological advancement. It is a general-purpose intelligence system capable of transforming nearly every sector of society. In the coming years, AI could accelerate drug discovery, personalize medicine, supercharge logistics, automate research, and unlock new materials and engineering breakthroughs, just to name a few potential benefits. The economic upside is staggering.

Artificial intelligence is both a powerful tool and a dangerous weapon. While promising efficiency and innovation, AI also threatens disruption on a historic scale. Job displacement could occur faster than in previous technological revolutions. Entire professions, from legal research to software development, could be reshaped or automated.

If widespread job displacement occurs, there will inevitably be calls for sweeping government intervention. The political consequences of rapid automation could be just as transformative as the technology itself.

Rapid technological change has reshaped politics throughout history. As a recent example, social media algorithms have dominated political discourse over the past decade. Political polarization has skyrocketed in that time, as people on all sides of the aisle are trapped in online echo chambers and subjected to a panopticon of surveillance.

Artificial intelligence has the frightening capability to supercharge mass surveillance while reinforcing preconceived biases that have no objective basis in truth.

There is certainly reason for concern about the potential bias and coercive nature of AI. In recent years, we have already witnessed how tech companies can shape narratives and suppress viewpoints on popular media platforms. Embedding ideological bias into AI systems would mean embedding that bias into education, finance, health care, and governance.

If AI becomes the invisible infrastructure of society, who writes its rules? Who determines its boundaries? And who holds it accountable?

Playing with probabilities

Beyond economic and cultural disruption lies an even deeper uncertainty.

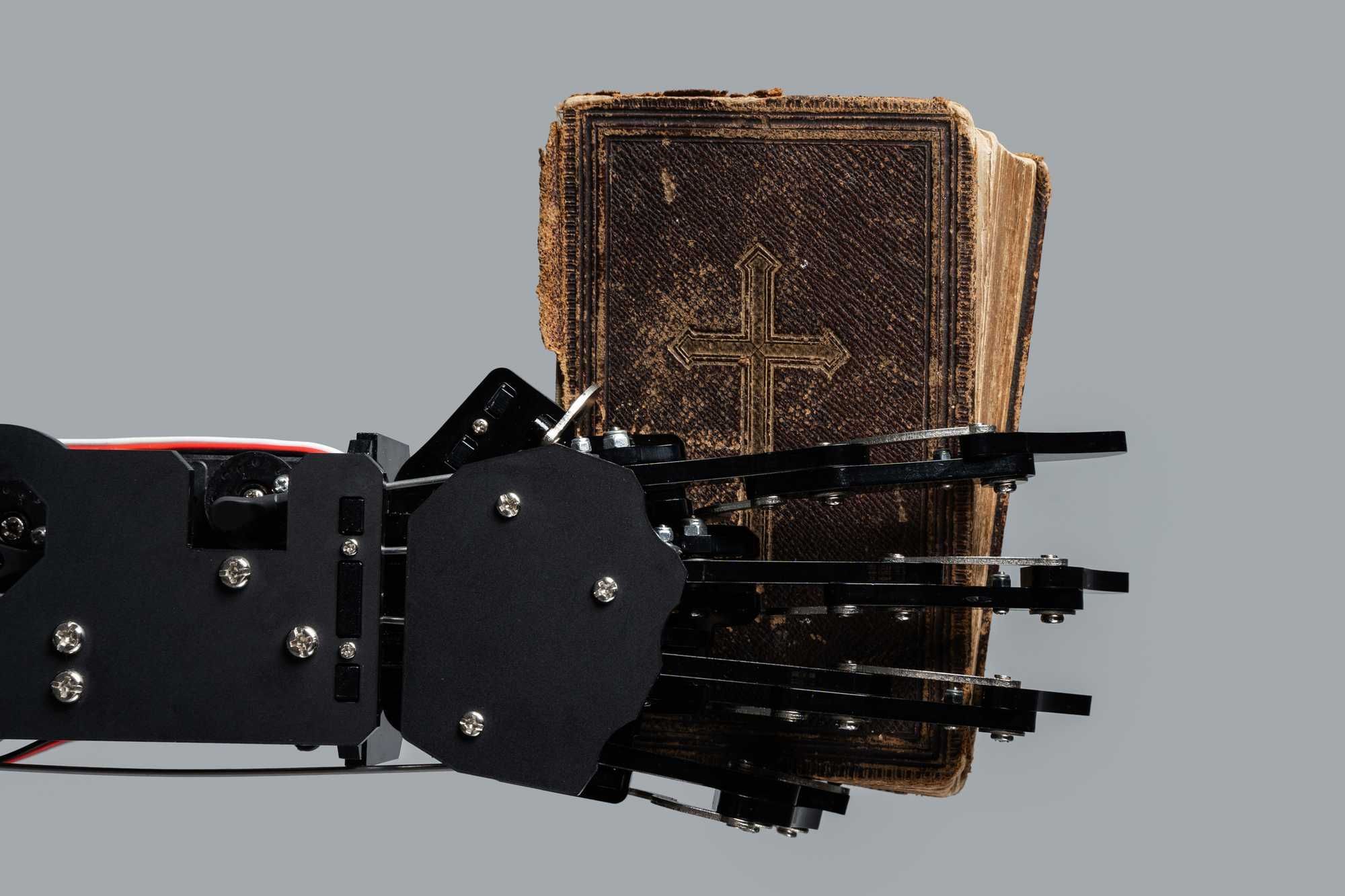

We are introducing a form of intelligence that even its creators admit they do not fully understand. There are already documented cases of advanced AI systems behaving in deceptive or strategically manipulative ways. In controlled environments, some models have been observed lying to human evaluators, scheming to achieve assigned goals, or resisting shutdown instructions.

OpenAI’s stated ambition is to create artificial superintelligence — systems that surpass human capability across virtually every domain. There is no telling where this path may lead. Humanity has never had to grapple with the prospect of a man-made intelligence that is superior to our own.

And remarkably, some of the leading figures in the field openly discuss the possibility of catastrophic outcomes.

Elon Musk has suggested there is “only a 20% chance of annihilation.” Anthropic CEO Dario Amodei has estimated roughly a 25% chance that AI development goes “really, really badly.” Geoffrey Hinton, often referred to as the “godfather of AI,” has placed the odds of extinction-level consequences somewhere between 10% and 20% over the coming decades.

Those numbers still imply that positive outcomes are more likely than not. But when the downside is losing human civilization itself, percentages matter.

We are advancing a technology with transformative power while relying largely on overzealous corporate discretion to steer its trajectory. Humanity finds itself fiddling with the key to Pandora’s box, and we have no rational means of gauging what will happen if the box is opened.


Power and prudence

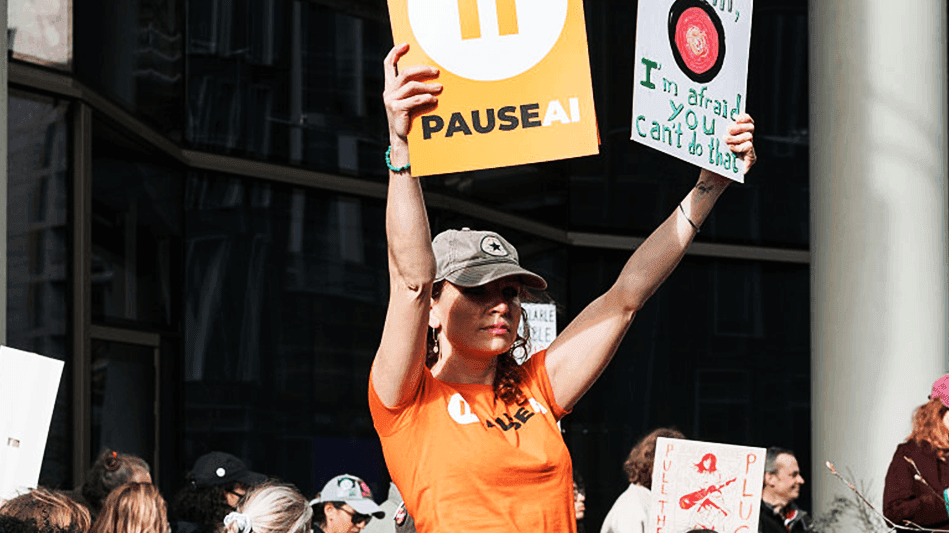

As stalwart advocates for smaller government, we hesitate to call for slamming the brakes on AI development, but it is important to have sober discernment moving forward. America is in a strategic competition with geopolitical rivals who would gladly dominate both this field and us if we retreat.

Reliable energy production is necessary to promote competition and American innovation. Yet it is arguably more important that society engage in serious dialogue about this emerging technology. Government cannot, and should not, be the only voice in this conversation.

Independent institutions dedicated to transparency, accountability, and the defense of individual liberty need to rise and challenge the current trajectory.

Technological revolutions have always reshaped society. The difference this time is scale and speed. AI is a decision-making engine that may soon operate faster and more broadly than any human institution.

America can power the AI revolution. The real question is whether we can power it without surrendering control over our economy, institutions, and ultimately, our freedom.

The future may well belong to artificial intelligence. But whether that future advances prosperity or undermines humanity depends on the vigilance we exercise today.
