Biden’s COVID censorship machine takes a hit: Missouri wins landmark ban on federal threats to Big Tech

A landmark settlement delivered a blow to the censorship industrial complex that silenced Americans during the COVID era.

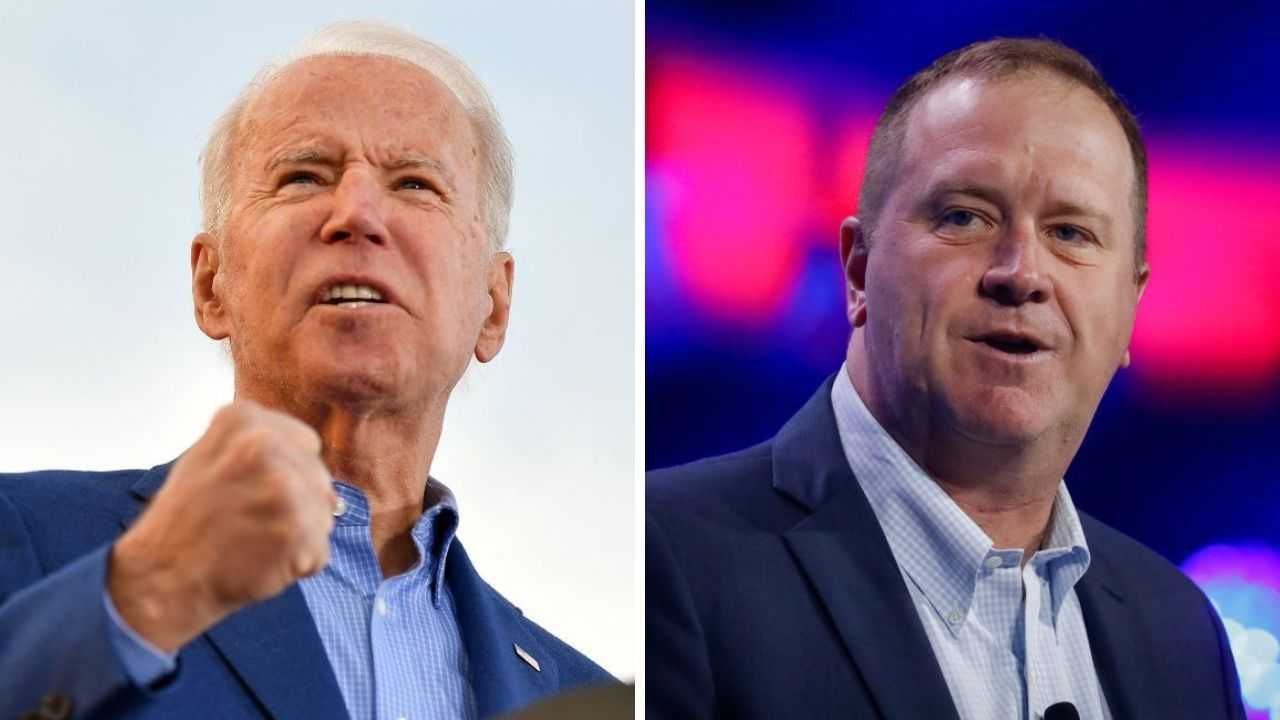

Sen. Eric Schmitt (R-Mo.) announced Tuesday that Missouri had reached a settlement agreement with the U.S. government in its Missouri v. Biden lawsuit, which accused the Biden administration of violating Americans' First Amendment rights by directing social media companies to censor speech challenging the government's COVID messaging.

Schmitt filed the lawsuit against the Biden administration while serving as Missouri attorney general, before securing his Senate seat.

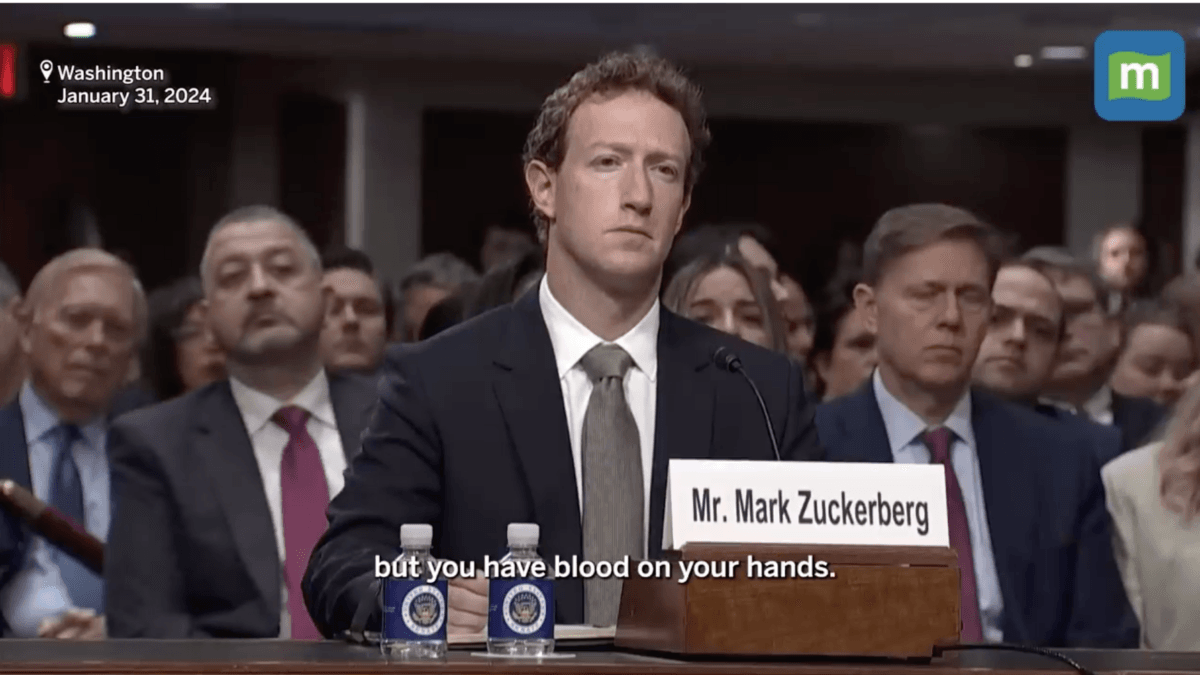

The agreement includes a 10-year consent decree that enforces a narrow permanent injunction against the surgeon general, the Centers for Disease Control and Prevention, and the Cybersecurity and Infrastructure Security Agency. The injunction bars them from threatening social media companies with any form of punishment for failing to remove or suppress content that contains protected speech.

However, this ban applies only to posts made on Facebook, Instagram, X, LinkedIn, and YouTube by the specific plaintiffs in the case, including Missouri and Louisiana government officials and agencies acting in their official capacity. It does not extend to other social media networks or content posted by the general public.

"The Parties also agree that government, politicians, media, academics, or anyone else applying labels such as 'misinformation,' 'disinformation,' or 'malinformation' to speech does not render it constitutionally unprotected," the agreement reads.

The settlement agreement still requires court approval before it takes effect.

"We just won Missouri v. Biden," Schmitt wrote in a post on X. "As Missouri's Attorney General, I sued the Biden regime for brazenly colluding with Big Tech to silence Missouri families — censoring the truth about COVID, the Hunter Biden laptop, the open border, and the 2020 election. They tried to turn Facebook, X, YouTube, and the rest into their private speech police, labeling dissent 'misinformation' while they pushed their narrative on the American people."

Schmitt called the consent decree the "first real, operational restraint on the federal censorship machine."

He explained that it "directly binds the Surgeon General, the CDC, and CISA: no more threats of legal, regulatory, or economic punishment. No more coercion. No more unilateral direction or veto of platform decisions to remove, suppress, deplatform, or algorithmically bury protected speech."

"For every working Missouri family tired of being silenced by their own government: this victory is yours. The heartland fought back, and the heartland delivered," Schmitt concluded.

Benjamin Weingarten, a senior contributor at the Federalist, addressed the victory's narrow application.

"This decree is limited to the plaintiffs, but as precedent, and practically, its impact may prove orders of magnitude more powerful in protecting disfavored speech," Weingarten wrote, calling it "a momentous blow for the First Amendment."

National Institutes of Health Director Jay Bhattacharya, who had to withdraw as a plaintiff in the case after the Trump administration appointed him to lead the agency, called the settlement "a huge win for all Americans."

"Huzzah! The consent decree in Missouri v. Biden is a historic victory for free speech in the US. Though I had to switch to the government side in the case after I became NIH director, I've never been more pleased by 'losing' in my life," he wrote.

'Biden officials at the highest levels of government tried to use Facebook, X, and YouTube as their speech police,' Sen. Eric Schmitt said.
