The Trump administration has unveiled a broad action plan for AI (America’s AI Action Plan). The general vibe is one of treating AI like a business, aiming to sell the AI stack worldwide and generate a lock-in for American technology. “Winning,” in this context, is primarily economic. The plan also includes the sorely needed idea of modernizing the electrical grid, a growing concern due to rising electricity demands from data centers. While any extra business is welcome in a heavily indebted nation, the section on the political objectivity of AI is both too brief and misunderstands the root cause of political bias in AI and its role in the culture war.

The plan uses the term "objective" and implies that a lack of objectivity is entirely the fault of the developer, for example:

> Update Federal procurement guidelines to ensure that the government only contracts with frontier large language model (LLM) developers who ensure that their systems are objective and free from top-down ideological bias.

The fear that AIs might tip the scales of the culture war away from traditional values and toward leftism is real. Try asking ChatGPT, Claude, or even DeepSeek about climate change, where COVID came from, or USAID.

This desire for objectivity of AI may come from a good place, but it fundamentally misconstrues how AIs are built. AI in general and LLMs in particular are a combination of data and algorithms, and the algorithms further break down into network architecture and training methods. Network architecture is frequently based on stacking transformer or attention layers, though it can be modified with concepts like “mixture of experts.” Training methods are varied and include pre-training, data cleaning, weight initialization, tokenization, and techniques for altering the learning rate. They also include post-training methods, where the base model is modified to conform to a metric other than the accuracy of predicting the next token.
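To make "the accuracy of predicting the next token" concrete, here is a minimal sketch of the quantity a base model is typically trained to minimize: the cross-entropy between its predicted distribution and the token that actually came next. The four-word vocabulary and the scores are invented for illustration, and the function names are hypothetical. Post-training swaps or supplements this signal with a different one, such as a score derived from human preferences.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def next_token_loss(logits, target_index):
    """Cross-entropy at one position: -log p(the token that really came next)."""
    probs = softmax(logits)
    return -math.log(probs[target_index])

# Hypothetical vocabulary and made-up scores, for illustration only.
vocab = ["the", "cat", "sat", "mat"]
logits = [0.5, 2.0, 0.1, -1.0]  # the model's scores for each candidate next token

# Low loss when the model's favorite token was the right one...
confident = next_token_loss(logits, vocab.index("cat"))
# ...high loss when the right token was the one it rated least likely.
surprised = next_token_loss(logits, vocab.index("mat"))

print(f"loss if the next token is 'cat': {confident:.3f}")
print(f"loss if the next token is 'mat': {surprised:.3f}")
```

Nothing in this objective refers to truth or neutrality. The model is rewarded only for matching whatever text it was fed, which is why the composition of that text matters so much.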

Many have complained that post-training methods like Reinforcement Learning from Human Feedback introduce political bias into models at the cost of accuracy, causing them to avoid controversial topics or spout opinions approved by the companies — opinions usually farther to the left than those of the average user. “Jailbreaking” models to avoid such restrictions was once a common pastime, but it is becoming harder, as corporate safety measures, sometimes as complex as entirely new models, scan both the input to and output from the underlying base model.
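The arrangement described above, where a separate checker scans both what goes into the base model and what comes out, can be sketched roughly as follows. This is a toy under stated assumptions: the keyword list stands in for what would in practice be a trained classifier, and every name here (`BLOCKED_TERMS`, `keyword_filter`, `guarded`, `echo_model`) is hypothetical rather than any vendor's actual API.

```python
from typing import Callable

BLOCKED_TERMS = {"forbidden topic"}  # hypothetical policy list
REFUSAL = "Sorry, I can't help with that."

def keyword_filter(text: str) -> bool:
    """Stand-in for a safety classifier: returns True when the text is flagged."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKED_TERMS)

def guarded(
    base_model: Callable[[str], str],
    flagged: Callable[[str], bool] = keyword_filter,
) -> Callable[[str], str]:
    """Wrap a base model so its input and its output are both scanned."""
    def respond(prompt: str) -> str:
        if flagged(prompt):   # input scan, before the base model runs
            return REFUSAL
        answer = base_model(prompt)
        if flagged(answer):   # output scan, after the base model runs
            return REFUSAL
        return answer
    return respond

def echo_model(prompt: str) -> str:
    """Placeholder for the underlying base model."""
    return f"You asked: {prompt}"

chat = guarded(echo_model)
print(chat("hello"))
print(chat("Tell me about the Forbidden Topic"))
```

Because the checks sit outside the base model, rewording a prompt to slip past the input scan can still be caught on the way out, which is part of why jailbreaking has become harder.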

As a result of this battle between RLHF and jailbreakers, an idea has emerged that these post-training methods and safety features are how liberal bias gets into the models. The belief is that if we simply removed these, the models would display their true objective nature. Unfortunately for both the Trump administration and the future of America, this is only partially correct. Developers can indeed make a model less objective and more biased in a leftward direction under the guise of safety. However, it is very hard to make models that are more objective.

The problem is data

According to “Google AI Mode vs. Traditional Search & Other LLMs,” the top domains cited in LLMs are: Reddit (40%), YouTube (26%), Wikipedia (23%), Google (23%), Yelp (21%), Facebook (20%), and Amazon (19%).

This seems to imply a lot of the outside-fact data in AIs comes from Reddit. Spending trillions of dollars to create an “eternal Redditor” isn’t going to cure cancer. At best, it might create a “cure cancer cheerleader” who hypes up every advance and forgets about it two weeks later. One can only do so much in the algorithm layer to counteract the frame of mind of the average Redditor. In this sense, the political slant of LLMs is less due to the biases of developers and corporations (although they do exist) and more due to the biases of the training data, which is heavily skewed toward being generated during the "woke tyranny" era of the internet.

In this way, the AI bias problem is not about removing bias to reveal a magic objective base layer. Rather, it is about creating a human-generated and curated set of true facts that can then be used by LLMs. Using legislation to remove the methods by which left-leaning developers push AIs into their political corner is a great idea, but it is far from sufficient. Getting humans to generate truthful data is extremely important.

The pipeline to create truthful data likely needs at least four steps.

1. Raw data generation of detailed tables and statistics (usually done by agencies or large enterprises).

2. Mathematically informed analysis of this data (usually done by scientists).

3. Distillation of scientific studies for educated non-experts (in theory done by journalists, but in practice rarely done at all).

4. Social distribution via either permanent (wiki) or temporary (X) channels.

This problem of truthful data plus commentary for AI training is a government, philanthropic, and business problem.

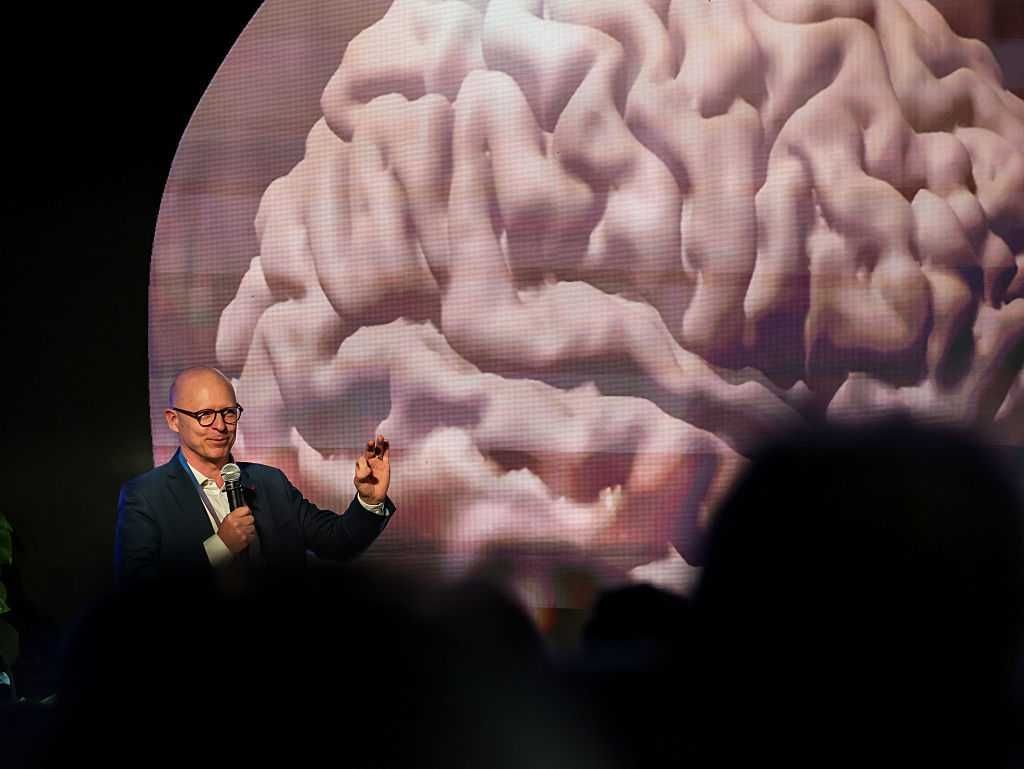

Photo by Lionel BONAVENTURE/AFP/Getty Images

I can imagine an idealized scenario in which all these problems are solved by harmonious action in all three directions. The government can help the first portion by forcing agencies to be more transparent with their data, putting it into both human-readable and computer-friendly formats. That means more CSVs, plain text, and hyperlinks and fewer citations, PDFs, and fancy graphics with hard-to-find data. FBI crime statistics, immigration statistics, breakdowns of government spending, the outputs of government-conducted research, minute-by-minute election data, and GDP statistics are fundamentally pillars of truth and are almost always politically helpful to the broader right.
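To see why a CSV is "computer-friendly" in a way a PDF chart is not, consider how little code it takes to aggregate one. The rows below are made-up placeholders, not real statistics, and the column names are hypothetical.

```python
import csv
import io

# Made-up placeholder rows, NOT real figures; the column names are hypothetical.
RAW = """year,category,amount_usd
2020,example_program_a,100
2020,example_program_b,250
2021,example_program_a,120
"""

def totals_by_year(csv_text):
    """Sum the amount_usd column for each year."""
    totals = {}
    for row in csv.DictReader(io.StringIO(csv_text)):
        year = int(row["year"])
        totals[year] = totals.get(year, 0) + int(row["amount_usd"])
    return totals

print(totals_by_year(RAW))  # {2020: 350, 2021: 120}
```

The same numbers locked inside a chart image or a scanned PDF would need manual transcription or error-prone extraction before anyone, human or AI, could check them.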

In an ideal world, the distillation of raw data into causal models would be done by a team of highly paid scientists via a nonprofit or a government contract. This work is too complex to be left to the crowd, and its benefits are too distributed to be easily captured by the market.

The journalistic portion of combining papers into an elite consensus could be done similarly to today: with high-quality, subscription-based magazines. While such businesses can be profitable, for this content to integrate with AI, the AI companies themselves need to properly license the data and share revenue.

The last step seems to be mostly working today, as it would be done by influencers paid via ad revenue shares or similar engagement-based metrics. Creating permanent, rather than disappearing, data (à la Wikipedia) is a time-intensive and thankless task that will likely need paid editors in the future to keep the quality bar high.

Freedom doesn't always boost truth

However, we do not live in an ideal world. The epistemic landscape has vastly improved since Elon Musk's purchase of Twitter. At the very least, truth-seeking accounts don’t have to deal with as much arbitrary censorship. Even other media have made token statements claiming they will censor less, even as some AI “safety” features are ramped up to a much higher setting than social media censorship ever was.

The challenge with X and other media is that tech companies generally favor technocratic solutions over direct payment for pro-social content. There seems to be a widespread belief in a marketplace of ideas: the idea that without censorship (or with only some person’s favorite censorship), truthful ideas will win over false ones. This likely contains an element of truth, but the peculiarities of each algorithm may favor only certain types of truthful content.

“X is the new media” is a commonly spoken refrain. Yet both anonymous and public accounts on X are implicitly burdened with tasks as varied and complex as gathering election data, creating long think pieces, and the consistent repetition of slogans reinforcing a key message. All for a chance of a few Elon bucks. They are doing this while competing with stolen-valor thirst traps from overseas accounts. Obviously, most are not that motivated and stick to pithy and simple content rather than intellectually grounded think pieces. The broader “right” is still needlessly ceding intellectual and data-creation ground to the left, despite occasional victories in defunding anti-civilizational NGOs and taking control of key platforms.

The other issue experienced by data creators across the political spectrum is the reliance on unpaid volunteers. As the economic belt inevitably tightens and productive people have less spare time, the supply of quality free data will worsen. It will also worsen as both platforms and users feel rightful indignation at their data being “stolen” by AI companies making huge profits, thus moving content into gatekept platforms like Discord. While X is unlikely to go back to the “left,” its quality can certainly fall farther.

Even Redditors and Wikipedia contributors provide fairly complex, if generally biased, data that powers the entire AI ecosystem. Also for free. A community of unpaid volunteers working to spread useful information sounds lovely in principle. However, in addition to the decay in quality, these kinds of “business models” are generally very easy to disrupt with minor infusions of outside money, if it just means paying a full-time person to post. If you are not paying to generate politically powerful content, someone else is always happy to.

The other dream of tech companies is to use AI to “re-create” the entirety of the pipeline. We have heard so much drivel about “solving cancer” and “solving science.” While speeding up human progress by automating simple tasks is certainly going to work and is already working, the dream of full replacement will remain a dream, largely because of “model collapse,” the situation where AIs degrade in quality when they are trained on data generated by themselves. Companies occasionally hype up “no data/zero-knowledge/synthetic data” training, but a big example from 10 years ago, “RL from random play,” which worked for chess and Go, went nowhere in games as complex as StarCraft.
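A toy version of model collapse can be simulated in a few lines. In this sketch the "model" is nothing more than a bell curve described by a mean and a spread, and each generation is fitted only to a handful of samples produced by the generation before it. This is an illustration under heavy simplifying assumptions, not a claim about how any particular LLM behaves; the function name and parameters are hypothetical.

```python
import random
import statistics

def simulate_collapse(generations=200, sample_size=10, seed=0):
    """Refit a Gaussian to its own samples over and over; return the spread per generation."""
    rng = random.Random(seed)
    mean, std = 0.0, 1.0  # generation 0: the original, human-made distribution
    history = [std]
    for _ in range(generations):
        # Each generation sees only data produced by the previous model...
        samples = [rng.gauss(mean, std) for _ in range(sample_size)]
        # ...and is refitted to that data alone.
        mean = statistics.fmean(samples)
        std = statistics.pstdev(samples)
        history.append(std)
    return history

history = simulate_collapse()
print(f"spread at generation 0:   {history[0]:.6f}")
print(f"spread at generation {len(history) - 1}: {history[-1]:.6f}")
```

Each refit loses a little of the tails, and the losses compound, so the spread tends to shrink toward zero. The variety present in the original data is never recovered unless fresh outside data is mixed back in.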

So where does truth come from?

This brings us to the recent example of Grokipedia. Perusing it gives one a sense that we have taken a step in the right direction, with an improved ability to summarize key historical events and medical controversies. However, a number of articles are lifted directly from Wikipedia, which risks drawing the wrong lesson. Grokipedia can’t “replace” Wikipedia in the long term because Grok’s own summarization is dependent on it.

Like many of Elon Musk’s ventures, Grokipedia is two steps forward, one step back. The forward steps are a customer-facing Wikipedia that seems to be of higher quality and a good example of AI-generated long-form content that is not mere slop, achieved by automating the tedious, formulaic steps of summarization. The backward step is a lack of understanding of what the ecosystem looks like without Wikipedia. Because so much of Grokipedia is derived from Wikipedia, it will be very hard to keep neutral articles properly updated if Wikipedia disappears.

Even the current version suffers from a “chicken and egg” source-of-truth problem. If no AI has the real facts about the COVID vaccine and categorically rejects data about its safety or lack thereof, then Grokipedia will not be accurate on this topic unless a fairly highly paid editor researches and writes the true story. As mentioned, model collapse is likely to result from feeding too much of Grokipedia to Grok itself (and other AIs), leading to degradation of quality and truthfulness. Relying on unpaid volunteers to suggest edits creates a very easy vector for paid NGOs to influence the encyclopedia.

The simple conclusion is that to be good training data for future AIs, the next source of truth must be written by people. If we want to scale this process and employ a number of trustworthy researchers, Grokipedia by itself will probably remain a money-losing business. It would likely be both a better business and a better source of truth if, instead of being written by AI to be read by people, it were written by people to be read by AI.

Eventually, the domain of truth needs to be carefully managed, curated, and updated by a legitimate organization that, while not technically part of the government, would be endorsed by it. Perhaps a nonprofit NGO, except good and actually helping humanity. The idea of “the Foundation” or “Antiversity” is not new, but our over-reliance on AI to do the heavy lifting is. Such an institution, or a series of them, would need to be bootstrapped by people willing to invest in our epistemic future for the very long term.

Photo by: Nano Calvo/VW Pics/Universal Images Group via Getty Images

Photo by Chris Haston/WBTV via Getty Images

Photo by Samuel Boivin/NurPhoto via Getty Images

Hongqi Bridge over the Songhua River is under construction on April 1, 2024, in Jilin City, Jilin Province of China. (Photo by Zhang Jingfeng/VCG via Getty Images)
